How Generative AI Works

How generative AI works is one of the most fascinating questions in modern technology. Over the last few years, I’ve seen AI move from experimental labs into everyday tools used by developers, analysts, and IT professionals around the world. Tools like ChatGPT have made artificial intelligence feel accessible, practical, and even conversational.

In this article, I will walk through how generative AI actually works behind the scenes, what powers tools like ChatGPT, and why this technology is transforming enterprise IT faster than many professionals expected.

Understanding the Core Idea Behind Generative AI

At its core, generative AI is about pattern recognition and prediction.

The system studies enormous volumes of text, images, or data and learns patterns within them. Once trained, the model can generate entirely new content that follows those patterns.

This is why generative AI can:

- write articles

- generate code

- answer questions

- summarize documents

- produce creative ideas

From my experience, the easiest way to think about generative AI is this:

It works like an extremely advanced autocomplete system.

But instead of suggesting a single word in a message, it predicts one small piece of text at a time and repeats that step until it has built up entire sentences, paragraphs, or ideas.
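
To make the autocomplete comparison concrete, here is a minimal sketch of a word-level autocomplete that simply counts which word tends to follow which. It is a toy under stated assumptions: the function names `train_bigrams` and `autocomplete` and the one-line corpus are made up for illustration, and real generative models use neural networks rather than a lookup table of counts.

```python
from collections import Counter, defaultdict

def train_bigrams(text):
    """Count, for every word, which words follow it in the training text."""
    words = text.lower().split()
    follows = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows

def autocomplete(follows, start, length=5):
    """Repeatedly append the most frequent follower of the last word."""
    out = [start]
    for _ in range(length):
        candidates = follows.get(out[-1])
        if not candidates:
            break  # the last word was never followed by anything in training
        out.append(candidates.most_common(1)[0][0])
    return " ".join(out)

# Hypothetical one-line "training set" for illustration only.
corpus = "the model predicts the next word and the model predicts text"
model = train_bigrams(corpus)
print(autocomplete(model, "the", length=2))  # prints "the model predicts"
```

The output is assembled one word at a time, and each choice depends only on patterns counted in the training text. That is the same basic loop, at a vastly smaller scale, as the one described in this article.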

The Role of Neural Networks in Modern AI

Modern generative AI systems rely heavily on neural networks, a technology inspired by the human brain.

A neural network is essentially a collection of interconnected mathematical functions designed to detect patterns within data.

- Multi-layered processing: Information flows through various layers, which is why we call this “deep learning”.

- Relationship mapping: The network learns how words relate to each other not just as characters, but as meanings.

- Contextual understanding: It identifies how the meaning of a word like “bank” changes depending on whether it’s near the word “river” or “money”.

These networks can process information across multiple layers, which is why they are often referred to as deep learning systems.

Neural networks enable AI systems to:

- understand language patterns

- recognize relationships between words

- learn context within sentences

- generate coherent responses

Without neural networks, modern generative AI would not exist.

In Simple Words:

A neural network is like a digital brain that learns patterns from large amounts of data and uses those patterns to make predictions.
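
As a rough illustration of "interconnected mathematical functions", the sketch below runs numbers through a tiny two-layer network in plain Python. The weights are hand-picked, hypothetical values; in a real system they are learned from data during training, and there are billions of them rather than a handful.

```python
import math

def sigmoid(x):
    """Squash any number into the range 0..1."""
    return 1.0 / (1.0 + math.exp(-x))

def layer(inputs, weights, biases):
    """One layer: every neuron takes a weighted sum of the inputs, adds a bias,
    and passes the result through an activation function."""
    return [
        sigmoid(sum(w * x for w, x in zip(neuron_weights, inputs)) + bias)
        for neuron_weights, bias in zip(weights, biases)
    ]

# Hypothetical, hand-picked weights (a trained network would learn these).
HIDDEN_WEIGHTS = [[0.5, -0.5], [1.0, 1.0]]   # 2 hidden neurons, 2 inputs each
HIDDEN_BIASES = [0.0, -1.0]
OUTPUT_WEIGHTS = [[1.0, -1.0]]               # 1 output neuron, 2 inputs
OUTPUT_BIASES = [0.0]

def forward(inputs):
    """Pass the inputs through the hidden layer, then the output layer."""
    hidden = layer(inputs, HIDDEN_WEIGHTS, HIDDEN_BIASES)
    return layer(hidden, OUTPUT_WEIGHTS, OUTPUT_BIASES)

print(forward([1.0, 0.0]))
```

Stacking more calls to `layer` is what makes a network "deep".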

What Large Language Models Actually Do

The technology behind ChatGPT is known as a Large Language Model (LLM).

Large Language Models are trained on vast collections of written content to understand how language works.

These models learn:

- grammar structures

- word relationships

- sentence patterns

- contextual meaning

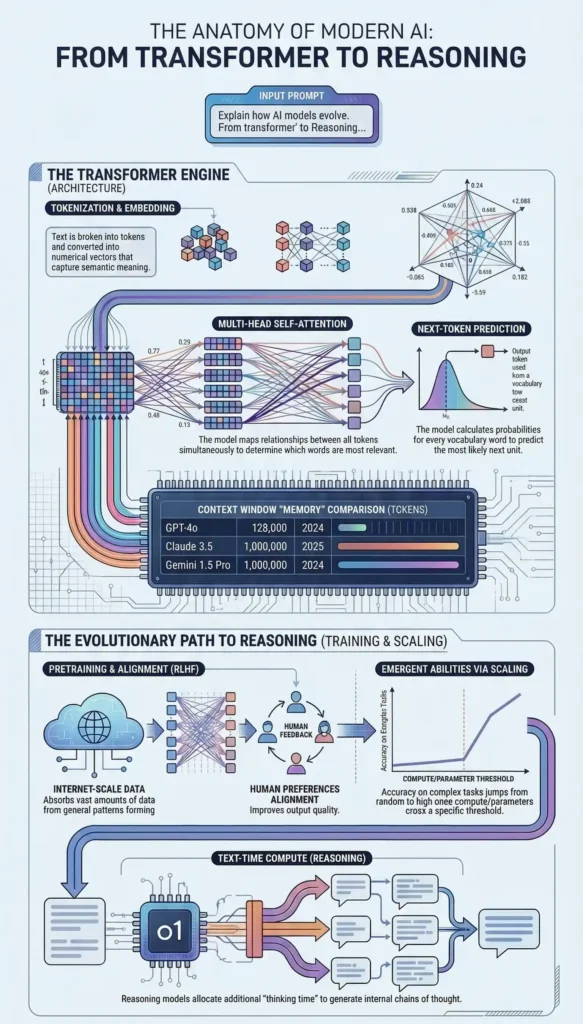

Most modern LLMs are built on the Transformer architecture, which lets a model weigh every word in a passage against the others when it processes language.

From my experience, this is where generative AI truly becomes powerful.

Instead of simply identifying keywords, LLMs understand the context of language.

That capability is what allows tools like ChatGPT to produce surprisingly human-like responses.
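
To give a feel for how context can change a word's meaning inside a Transformer, here is a minimal sketch of the attention idea: each word's vector is blended with the vectors of the words around it. Everything specific here is an assumption for illustration: the two-number vectors in `VECTORS` are made up, and `contextualize` is a hypothetical helper. Real models use learned vectors with many more dimensions, plus learned projections that this sketch leaves out.

```python
import math

def softmax(scores):
    """Turn raw scores into weights that sum to 1."""
    highest = max(scores)
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def contextualize(word, sentence, vectors):
    """Blend a word's vector with the vectors of every word in the sentence,
    weighted by how similar each one is to the word (scaled dot product)."""
    query = vectors[word]
    keys = [vectors[w] for w in sentence]
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)
    return [
        sum(w * key[i] for w, key in zip(weights, keys))
        for i in range(len(query))
    ]

# Made-up vectors: first number ~ "finance", second number ~ "nature".
VECTORS = {"river": [0.0, 2.0], "money": [2.0, 0.0], "bank": [1.0, 1.0]}

print(contextualize("bank", ["river", "bank"], VECTORS))
print(contextualize("bank", ["money", "bank"], VECTORS))
```

The same word "bank" ends up with a vector that leans toward "nature" next to "river" and toward "finance" next to "money", which is the intuition behind context-aware language processing.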

How ChatGPT Generates Human-Like Responses

When you ask ChatGPT a question, several steps happen behind the scenes in quick succession.

The process generally looks like this:

User Prompt

You provide a question or instruction.

Tokenization

The AI breaks the sentence into smaller units called tokens.

Pattern Analysis

The model analyzes relationships between tokens using neural networks.

Prediction

The system assigns a probability to each possible next token and picks a likely one.

Response Generation

Tokens are combined to form sentences and paragraphs.

The process repeats until the full response is generated.
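
The steps above can be sketched as a short loop. This is a toy under stated assumptions, not how ChatGPT is implemented: `NEXT_TOKEN_PROBS` is a hypothetical hand-written table standing in for the neural network, and it looks only at the last token, whereas a real model considers the whole context.

```python
import random

# Hypothetical stand-in for a trained model: maps the last token to
# probabilities for the next token. "<end>" means "stop generating".
NEXT_TOKEN_PROBS = {
    "generative": {"ai": 0.9, "models": 0.1},
    "ai": {"predicts": 0.7, "<end>": 0.3},
    "models": {"predicts": 1.0},
    "predicts": {"tokens": 1.0},
    "tokens": {"<end>": 1.0},
}

def predict_next(tokens, rng, greedy=True):
    """Pick the next token: the single most likely one, or a weighted sample."""
    probs = NEXT_TOKEN_PROBS[tokens[-1]]
    if greedy:
        return max(probs, key=probs.get)
    choices, weights = zip(*probs.items())
    return rng.choices(choices, weights=weights, k=1)[0]

def generate(prompt_tokens, max_tokens=10, greedy=True, seed=0):
    """Append one predicted token at a time until "<end>" or the length limit."""
    rng = random.Random(seed)
    tokens = list(prompt_tokens)
    for _ in range(max_tokens):
        nxt = predict_next(tokens, rng, greedy)
        if nxt == "<end>":
            break
        tokens.append(nxt)
    return tokens

print(" ".join(generate(["generative"])))  # prints "generative ai predicts tokens"
```

Passing `greedy=False` samples from the probabilities instead of always taking the top choice, which is one reason the same prompt can produce different responses.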

What appears to be intelligence is actually a highly advanced prediction engine.

From My Experience:

Many people assume ChatGPT “knows” the answers it provides. In reality, it predicts the most statistically likely response based on patterns learned during training.

Understanding this distinction helps professionals use AI tools more effectively.

Training Data: The Foundation of Generative AI

Generative AI models require massive datasets during training.

These datasets typically include:

- books

- articles

- technical documents

- websites

- academic research

- programming code

The model processes this information and learns patterns within language.

From my experience, data quality plays a critical role here. Poor data leads to poor AI outputs.

This is why modern AI systems invest heavily in data cleaning, filtering, and optimization during training.
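
As a small illustration of what cleaning and filtering can mean in practice, the sketch below normalizes whitespace, drops very short fragments, and removes duplicates. The function name `clean_corpus` and the `min_words` threshold are assumptions for this example; production pipelines apply many more checks than this.

```python
def clean_corpus(documents, min_words=5):
    """Normalize whitespace, drop very short documents, and remove duplicates
    (ignoring case), keeping the first copy of each document."""
    seen = set()
    cleaned = []
    for doc in documents:
        text = " ".join(doc.split())       # collapse runs of whitespace
        if len(text.split()) < min_words:  # too short to be useful
            continue
        key = text.lower()
        if key in seen:                    # already kept an identical document
            continue
        seen.add(key)
        cleaned.append(text)
    return cleaned

raw = [
    "Generative AI learns patterns from data.",
    "generative   AI learns patterns from data.",  # duplicate, messy spacing
    "Click here",                                  # too short
]
print(clean_corpus(raw))
```

Only the first document survives, which is the point: the model should not learn from noise or see the same text over and over.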

Tokens, Context, and Prediction in AI Models

To understand how generative AI works, we must look at tokens and context.

A token is simply a unit of text: a whole word, part of a word, or a punctuation mark.

For example:

Sentence:

Generative AI is transforming technology.

Tokens might be:

- Generative

- AI

- is

- transforming

- technology

AI models analyze token relationships to determine meaning and context.
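
Here is a minimal sketch of tokenization using the example sentence above. It splits on words and punctuation, which is a simplification: production tokenizers typically split rarer words into smaller pieces. The helper names `tokenize` and `build_vocab` are made up for this example.

```python
import re

def tokenize(text):
    """Split text into word tokens and punctuation tokens."""
    return re.findall(r"\w+|[^\w\s]", text)

def build_vocab(tokens):
    """Give every distinct token a numeric ID, in order of first appearance."""
    vocab = {}
    for tok in tokens:
        vocab.setdefault(tok, len(vocab))
    return vocab

tokens = tokenize("Generative AI is transforming technology.")
vocab = build_vocab(tokens)
ids = [vocab[tok] for tok in tokens]

print(tokens)  # ['Generative', 'AI', 'is', 'transforming', 'technology', '.']
print(ids)     # [0, 1, 2, 3, 4, 5]
```

The model never sees the text itself, only the numeric IDs, so every later step works on sequences of numbers.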

Context is extremely important because language meaning changes depending on surrounding words.

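Modern AI models work within a context window, a limit on how many tokens they can consider at once. Everything inside the window, including earlier parts of the conversation, can influence the response. Anything that no longer fits is effectively forgotten.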
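
One simple way to picture a context window is as a fixed-size slice over the most recent tokens. The sketch below assumes plain truncation; `fit_to_context` is a hypothetical helper, and real applications may handle long conversations differently, for example by summarizing older messages.

```python
def fit_to_context(tokens, max_tokens):
    """Keep only the most recent tokens that fit in the context window."""
    if max_tokens <= 0:
        return []
    return tokens[-max_tokens:]

conversation = ["hello", "my", "name", "is", "sam", "what", "is", "my", "name"]
print(fit_to_context(conversation, 4))  # ['what', 'is', 'my', 'name']
```

With a window of four tokens, the name itself has already fallen out of view, which is why very long conversations can lose track of early details.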

Reinforcement Learning and AI Improvement

Another important component in generative AI systems is Reinforcement Learning from Human Feedback (RLHF).

This technique improves AI responses by incorporating human evaluation.

The process works like this:

- Humans review AI responses

- They rank the best responses

- The system learns from these rankings

- The model improves future responses

From my experience, this step is critical for making AI systems useful in real-world scenarios.

Without human feedback, AI models would often produce confusing or irrelevant answers.
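
To show how human rankings can become a training signal, here is a minimal sketch. It turns a best-to-worst ranking into preferred/rejected pairs and scores each pair with a pairwise logistic loss, which is one common formulation and not necessarily what any particular product uses. The function names and the numeric scores are assumptions for illustration.

```python
import math
from itertools import combinations

def ranking_to_pairs(ranked_responses):
    """Turn a best-to-worst ranking into (preferred, rejected) pairs."""
    return list(combinations(ranked_responses, 2))

def preference_loss(score_preferred, score_rejected):
    """Pairwise logistic loss: small when the preferred response scores
    higher than the rejected one, large when the order is wrong."""
    diff = score_preferred - score_rejected
    return -math.log(1.0 / (1.0 + math.exp(-diff)))

# Hypothetical ranking from a human reviewer, best first.
pairs = ranking_to_pairs(["answer A", "answer B", "answer C"])
print(pairs)

# Hypothetical scores: agreeing with the human gives a lower loss.
print(preference_loss(2.0, 0.0) < preference_loss(0.0, 2.0))  # prints True
```

Training adjusts the scoring so that this loss shrinks, which nudges the system toward the responses people actually preferred.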

Enterprise Applications of Generative AI

Generative AI is rapidly transforming enterprise environments.

Many organizations are now using AI for:

- software development assistance

- automated documentation

- customer support chatbots

- knowledge management

- business intelligence summaries

- IT operations automation

This reminds me of the early days of cloud computing.

At first, companies experimented with small deployments.

Then suddenly, adoption accelerated across the entire industry.

We are seeing the same pattern with generative AI.

Limitations and Real-World Challenges of Generative AI

Despite its impressive capabilities, generative AI has several limitations.

Some of the most important challenges include:

Hallucinations

AI sometimes generates information that sounds plausible but is incorrect.

Bias

Training data may contain biases.

Data Privacy

Sensitive enterprise data must be handled carefully.

Computation Cost

Training large models requires enormous computing resources.

From my experience, organizations adopting AI must balance innovation with responsible implementation.

Final Thoughts: What This Technology Means Going Forward

Generative AI is genuinely different in its pace and breadth. The Transformer architecture, the scale of training, the emergent capabilities that arise from that scale — these represent a real discontinuity from what came before. Not a bigger version of the last thing. Something categorically new.

But the fundamentals of working with it effectively — clarity of problem, quality of data, rigour of governance, honesty about limitations, and respect for the humans whose work it touches — those fundamentals have not changed at all.

How generative AI works, at the deepest level, is this: it is a very large, very fast, very capable system for finding and applying patterns in human knowledge. It can do this at a scale and speed that humans cannot match. What it cannot do — and what remains irreplaceably human — is understand the full context of why a decision matters, take genuine accountability for the consequences, and navigate the organisational complexity of making something work in the real world.

That combination of AI capability and human judgment is not a compromise. It is the actual model. And from where I sit, it is the most interesting professional landscape I have worked in during two decades of building and managing technology.

The best thing you can do — regardless of where you are in your AI journey — is to use the tools. Not just read about them. Use them on real problems, in real contexts, with real consequences. That is the only way the map becomes a place you actually live in.

Frequently Asked Questions (FAQ)

How does generative AI work?

Generative AI works by training neural networks on massive datasets to identify language patterns. When a user provides a prompt, the model predicts the most probable sequence of words based on those patterns, generating new text that appears natural and meaningful.

How does ChatGPT generate answers?

ChatGPT analyzes a user prompt, converts the text into tokens, and predicts the most likely next words using a large language model. This prediction process repeats rapidly until the model generates a complete and coherent response.

What is a Large Language Model?

A Large Language Model is an AI system trained on massive text datasets to understand and generate human language. These models use neural networks and transformer architectures to process context, grammar, and relationships between words.

Why is generative AI important?

Generative AI enables machines to create content, automate complex tasks, and assist humans in decision-making. It has significant applications across industries including IT, healthcare, finance, and customer support.

Is generative AI safe for enterprise use?

Generative AI can be safe for enterprise environments when implemented with proper governance, security controls, and human oversight. Organizations must carefully manage data privacy, bias risks, and model accuracy.

Also visit my blog page “Know it all series for what is AI”, which covers AI in more detail.