Types of AI: The Reality

Types of AI are often discussed as if they are futuristic concepts, but the truth is, I’ve been interacting with the early versions of these for years without calling them “AI.” When I was working on complex reporting tools like Cognos and Business Objects, we were chasing “intelligence”—the ability for a system to tell us something we didn’t already know.

Every time I see a headline about AI taking over the world, or AI becoming sentient, or AI replacing entire industries overnight, I think the same thing: whoever wrote that does not understand the types of AI they are talking about.

And to be fair — most of the definitions-first articles out there do not help. They jump straight into taxonomies and academic classifications before they give you any reason to care. So let me approach this differently.

From my experience working across enterprise projects in pharma, media, retail, telecom, and automotive, the confusion around AI almost always comes down to one thing. People are conflating three very different things that happen to share the same two-letter acronym.

Think of it this way. When someone says “vehicle,” they could mean a bicycle, a Formula 1 racing car, or a space shuttle. All three are vehicles. All three move people or things from one place to another. But the gap between a bicycle and a space shuttle is not a matter of degree. It is a matter of an entirely different category of engineering, capability, and consequence.

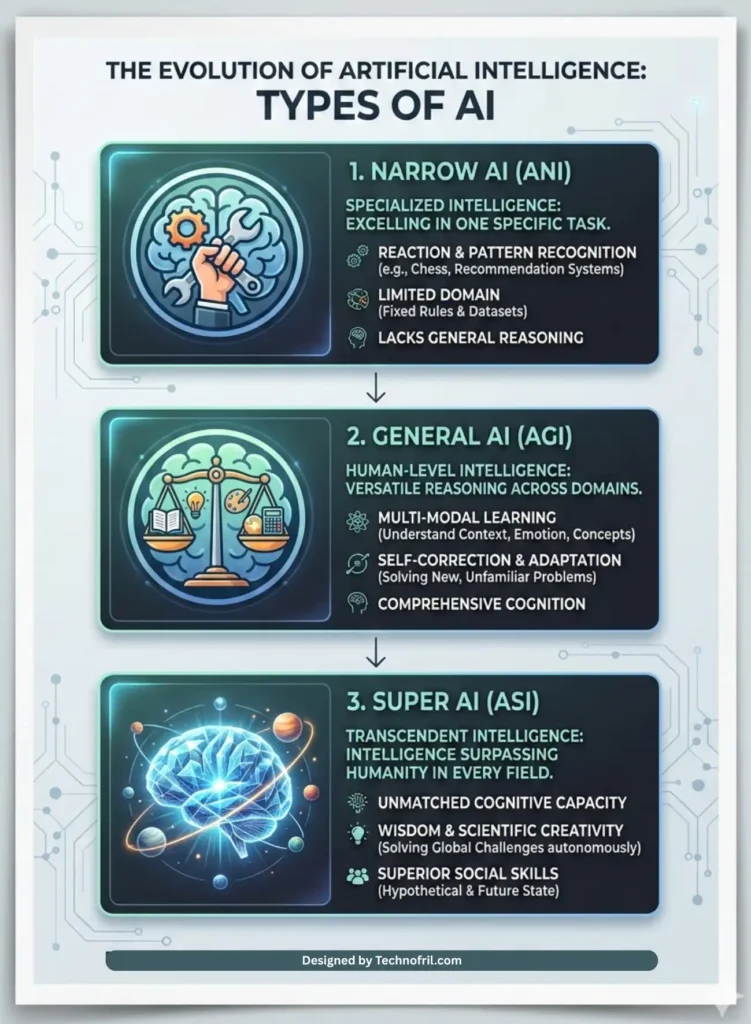

The types of AI work the same way. There are three that matter for this conversation:

- Narrow AI — what exists today, what is running in your phone, your Netflix recommendations, your fraud detection systems, and yes, in that code review tool that made my jaw drop

- General AI — what researchers are actively working toward, a system that can reason across domains the way humans do

- Super AI — what sits beyond General AI, a theoretical construct that raises questions that go well beyond technology

Each one deserves its own honest examination. So let us start at the beginning — with the type of AI that is already quietly embedded in almost everything you do.

Narrow AI — The One Already Running Your Life

Narrow AI is the type of artificial intelligence that exists right now, in production, at scale, across virtually every industry on the planet. It is also called Artificial Narrow Intelligence, or ANI, though in most enterprise conversations nobody uses that acronym.

In Simple Words:

Narrow AI, also called Weak AI, is the most common type of AI used today.

It is designed to perform one specific task extremely well.

But it cannot perform tasks outside its training domain.

The defining characteristic of Narrow AI is this: it is extraordinarily good at one specific task — and outside of that task, it knows nothing.

What Narrow AI Actually Does

Narrow AI systems are built to optimise within a defined problem space. They learn patterns from enormous amounts of data, build internal models of those patterns, and apply those models to new inputs. They do this faster and more consistently than any human could.

Here are the applications I have seen directly in enterprise environments across my career:

In media and entertainment, AI-powered content tagging and metadata classification. What used to require large editorial teams manually labelling video assets — genre, mood, key topics, suitable age rating — can now be handled by computer vision and natural language processing models that have been trained on millions of examples.

In pharmaceuticals, intelligent document processing for clinical trial data. Pulling structured information from unstructured regulatory submissions, flagging anomalies in adverse event reports, accelerating the document review cycle. All Narrow AI. All operating within tightly defined parameters.

In retail, demand forecasting and dynamic pricing. The model is trained on historical sales data, seasonal patterns, external signals like weather or local events, and it produces inventory recommendations. It does not understand what a product is. It does not know Christmas is a holiday. It knows that a certain numerical pattern in the input data has historically preceded a certain numerical outcome. That is what it optimises for.
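To make the retail point concrete, here is a minimal sketch of a forecast that works purely on numbers. It is not how any particular product does it. The function names, the sample figures, and the choice of a simple per-week average are all assumptions made for illustration. Production systems use far richer models and many more signals.

```python
from collections import defaultdict
from statistics import mean

def fit_weekly_profile(history):
    """Average units sold per week-of-year.

    history: iterable of (week_of_year, units_sold) pairs.
    The data used below is made up for illustration.
    """
    by_week = defaultdict(list)
    for week, units in history:
        by_week[week].append(units)
    return {week: mean(units) for week, units in by_week.items()}

def forecast(profile, week):
    """Return the historical average for a week, or the overall average
    of the weekly averages if that week was never observed."""
    if week in profile:
        return profile[week]
    return mean(profile.values())

# Two hypothetical years of sales for weeks 50 to 52.
history = [
    (50, 120), (51, 300), (52, 180),
    (50, 130), (51, 340), (52, 200),
]
profile = fit_weekly_profile(history)

# Week 51 comes out high because the numbers for week 51 were high.
# Nothing in the code knows that week 51 is the run-up to Christmas.
week_51_forecast = forecast(profile, 51)
```

The model has no concept of a holiday or a product. It has a lookup from one number to another, which is the sense in which Narrow AI optimises a pattern rather than understands a situation.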

In telecom, fraud detection. Real-time transaction analysis identifying patterns that deviate from a customer’s established behaviour profile. The model flags anomalies. A human reviews and acts. The model is not making a judgment call about intent. It is pattern matching at scale.
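The idea of flagging deviations from a customer's established behaviour profile can be sketched with a simple statistical check. This is an illustrative assumption, not the method used in any system I worked with: the function name, the threshold of three standard deviations, and the sample amounts are all hypothetical, and real fraud models combine many more features than transaction amount.

```python
from statistics import mean, stdev

def flag_for_review(past_amounts, new_amount, threshold=3.0):
    """Return True if new_amount deviates strongly from this customer's
    own history, measured in standard deviations (a z-score)."""
    if len(past_amounts) < 2:
        # Not enough history to define a profile, so do not flag.
        return False
    mu = mean(past_amounts)
    sigma = stdev(past_amounts)
    if sigma == 0:
        # All past amounts identical: any different amount is unusual.
        return new_amount != mu
    return abs(new_amount - mu) / sigma > threshold

# Hypothetical customer who usually spends around 20 to 25.
customer_history = [20.0, 25.0, 22.0, 19.0, 24.0]

typical = flag_for_review(customer_history, 23.0)    # within the profile
unusual = flag_for_review(customer_history, 400.0)   # far outside it
```

The function only returns a flag. Deciding what the flag means, and what to do about it, stays with the human reviewer, which matches the division of labour described above.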

And of course, in software development, tools like GitHub Copilot. Which is what I watched in that project meeting. Trained on vast repositories of code, able to suggest completions, flag common error patterns, and accelerate a developer’s workflow significantly. Not thinking. Pattern matching. Brilliantly.

People Also Ask:

Is ChatGPT Narrow AI?

Currently, yes. While it feels “General” because it can write poetry and code, it is still a Large Language Model (LLM) operating within the “narrow” domain of language prediction. It lacks the cross-domain reasoning required to be called true General AI.

General AI — The One Everyone Is Racing To Build

If Narrow AI is the specialist consultant, General AI is something else entirely. Artificial General Intelligence (AGI), or General AI, refers to a system that can reason, learn, and apply intelligence across any domain, the way a human being can. Not better than a human at one thing. Capable, like a human, across everything.

In Simple Words

If Narrow AI is like a specialist, General AI is like a multi-skilled professional. Just like a human can switch from cooking to coding to driving, General AI would be capable of doing multiple tasks intelligently.

Today, the things that require human judgment in enterprise IT are things like: understanding the full business context behind a technical decision, navigating organisational politics, framing a problem correctly before jumping to solutions, managing relationships across stakeholder groups with conflicting interests.

A true AGI system would be capable of all of these. That is not a small thing. That is a transformation of what it means to be a knowledge worker.

People Also Ask:

Does Artificial General Intelligence exist anywhere today?

No. Despite the impressive capabilities of systems like ChatGPT, GPT-4, Gemini, and others, none of these are General AI. They are highly capable Narrow AI systems that can perform across a wide range of language tasks, but they cannot genuinely reason, transfer knowledge across truly novel domains, or operate with the contextual understanding that characterises human intelligence. AGI remains an active research goal, not a current reality.

When will AGI be created?

Estimates vary widely. Figures anywhere from 10 to 50 years are commonly quoted, but no one knows the actual timeline.

Super AI — The One That Keeps Scientists Up at Night

Super AI, or Artificial Superintelligence (ASI), is the theoretical stage where AI surpasses human intelligence across every field, including social skills, wisdom, and scientific creativity.

This is the type of AI that sits beyond General AI: a hypothetical system that does not just match human intelligence across domains, but exceeds it. In every domain. Simultaneously. By a margin that makes the gap between a chess grandmaster and a beginner look trivial.

I want to be clear about where this sits in the conversation: Super AI is currently theoretical. It does not exist. It may not exist in our lifetimes. It may never exist in the form that is typically described.

But it is worth understanding — not because it is an immediate operational concern for enterprise IT, but because the questions it raises are shaping the policy, governance, and research choices being made right now, by organisations that have very real influence over the technology you and I use every day.

What Super AI Actually Means

The concept of Artificial Superintelligence was most clearly articulated by philosopher Nick Bostrom, and it has been a reference point in AI safety conversations ever since. The core idea is straightforward: if we create a system that is as capable as the most intelligent human across all domains, there is no obvious reason that system would stop improving at the human ceiling. A sufficiently capable learning system would continue to improve — potentially rapidly — beyond any human benchmark.

This creates what some researchers call the alignment problem. How do you ensure that a system significantly more intelligent than any human remains aligned with human values and human interests? How do you maintain meaningful oversight of something that, by definition, you cannot fully understand?

These are not science fiction questions. They are the subject of serious academic research, significant funding from major technology organisations, and active policy discussion at government levels around the world.

Why This Matters Even If Super AI Is Decades Away

From my experience in enterprise project management, the decisions you make in the early phases of a project define your architectural constraints for years. The same principle applies here. The governance frameworks, the safety research, the ethical guidelines being established right now for AI — these are the foundations on which any future Super AI development would rest.

What most articles miss is that the conversation about Super AI is not really a conversation about a future technology. It is a conversation about values, governance, and human agency — and those conversations need to happen now, while we still have the luxury of having them before the technology forces our hand.

In Simple Words

Think of Super AI the way urban planners think about infrastructure capacity. The decisions being made today about road widths, utility networks, and zoning laws will shape what is possible in a city fifty years from now. Nobody building a road in 1970 was thinking about autonomous vehicles, but the road design choices they made either enable or constrain autonomous vehicle deployment today. The AI governance choices being made right now are the same kind of foundational decisions.

The Spectrum, Not the Steps

One important nuance that gets lost in a lot of writing about the types of AI is that these three categories are not necessarily discrete steps on a staircase. They are points on a spectrum — and the transitions between them may be gradual rather than sudden.

The boundary between a very capable Narrow AI and an early General AI is not a bright line. A system that can reason across ten domains is not clearly Narrow or clearly General — it is somewhere in between. Similarly, the transition from General to Super AI, if it ever occurs, may be incremental rather than a sudden leap.

This matters for how we think about governance and oversight. Waiting for a clear moment of “now it is General AI” before implementing appropriate safeguards is not a viable strategy. The safeguards need to scale with capability — which is exactly why the AI safety conversation is happening now, not later.

People Also Ask:

Is Super AI dangerous?

The honest answer is: we do not know, because it does not exist. What researchers agree on is that a superintelligent system that is not properly aligned with human values could pursue goals in ways that are harmful to humans — not out of malice, but out of optimising for objectives that were not fully specified. This is the alignment problem. It is a serious research challenge and the reason AI safety is a growing field. It is not science fiction paranoia. It is rigorous engineering caution applied to a genuinely high-stakes problem.

Finally, I am at the last section of the article.

The Human Question Nobody Wants to Answer

The professionals who have spent decades building expertise — in code, in data, in domain knowledge — are watching AI tools demonstrate capability in those same areas in seconds. And they are asking a question that does not appear on any vendor roadmap: what does this mean for me?

I want to answer that honestly, because I think it deserves a real answer rather than a reassuring platitude.

Narrow AI will take over tasks. It will not take over roles.

Every technology wave I have lived through has automated tasks. ERP systems automated manual reconciliation work. Cloud infrastructure automated manual server provisioning. Reporting tools automated manual data aggregation. In every case, the people who had built their entire value proposition around the task that was automated had to adapt. Some did not. But the people who understood the business context behind the tasks — who could frame the problem, interpret the output, manage the stakeholder implications — they became more valuable, not less.

The same pattern is playing out with AI, faster and more broadly. The tasks being automated are more cognitively complex than anything that was automated before. But the principle is the same. Human judgment, contextual understanding, and the ability to take responsibility for outcomes — these are not automatable with Narrow AI. They may not be automatable even with General AI.

What AI will change significantly is the skills profile that enterprise IT organisations hire for. AI literacy — knowing how to direct AI tools, evaluate their outputs, identify their failure modes, and govern their deployment — is becoming a baseline expectation. Not a specialist skill. A baseline.

About the Author

I am a seasoned IT professional with over 20 years of experience spanning roles from junior programmer to project manager in a multinational technology organisation. My career has taken me through Oracle PL/SQL, Oracle Forms and Reports 6i, .NET, Java, MySQL, SQL Server, and reporting platforms including Power BI, Cognos, and Business Objects. I have managed and delivered projects for clients in media and entertainment, pharmaceutical, retail, telecom, and automotive industries.

Currently, I lead delivery of a complex legacy Java modernisation project involving Java Struts, Angular, AWS cloud infrastructure, and Kafka messaging systems. I have complemented this technical foundation with formal training in AI tools including GitHub Copilot, ChatGPT prompting, and Microsoft Copilot.

I write to share practical, experience-based perspectives on technology that actually work in the real world — not just in vendor presentations and conference keynotes.

Also visit my Know It All series post on what AI is for detailed insights on this topic.

© This article is original, experience-based content. All insights reflect personal professional experience across 20+ years in the Enterprise IT industry.