AI in Cybersecurity Today – Introduction

Something changed in enterprise cybersecurity, and it did not arrive with a warning.

I have watched technology waves roll in and reshape how we work. But what is happening right now in cybersecurity is different from anything I have seen before.

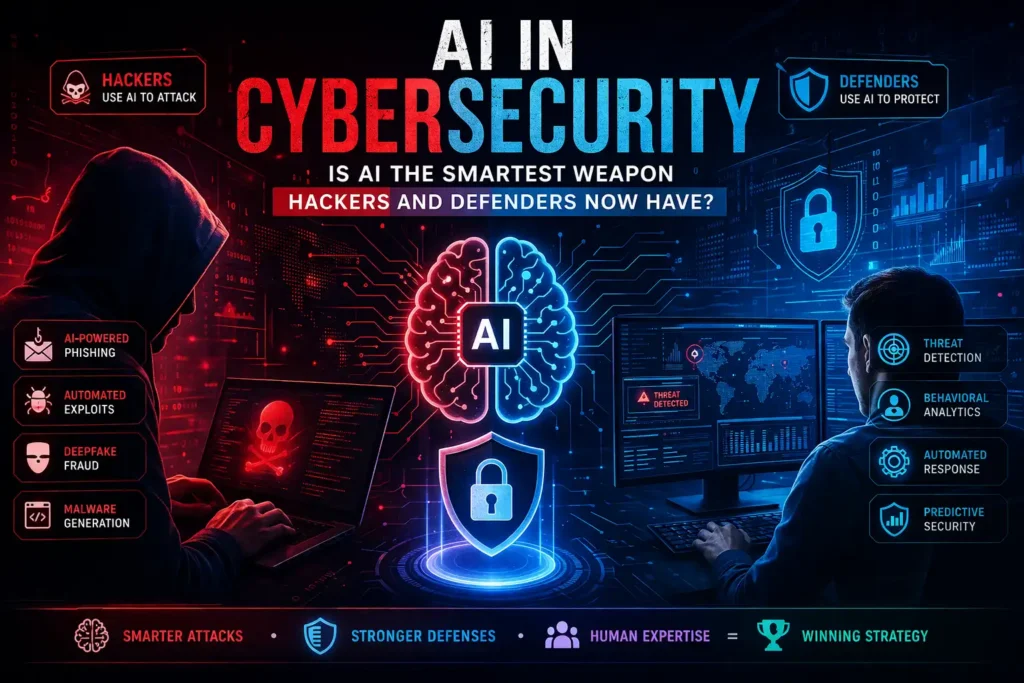

The same AI tools your security team uses to detect threats faster than any human analyst could are the ones hackers use to craft more convincing phishing emails, discover vulnerabilities at machine speed, and impersonate your CEO with a voice clone.

The role of AI in cybersecurity is no longer a future conversation. It is the conversation happening right now in every enterprise boardroom, every security operations centre, and every IT risk committee I have been part of.

This article is my honest, experience-driven take on both sides of that equation.

What Is the Role of AI in Cybersecurity Today

The role of AI in cybersecurity has fundamentally changed over the past decade. Earlier, AI was mostly used for analytics dashboards or anomaly detection experiments. Today, it is embedded deeply into real-time security operations.

This shift has turned AI from a “nice-to-have” feature into a core security layer.

How AI Has Shifted from Buzzword to Battlefield Tool

Not long ago, “AI in cybersecurity” meant a vendor slide deck with a neural network diagram and a promise of smarter threat detection. Most enterprise IT teams I worked with treated it as aspirational, something on the five-year roadmap, not the current sprint.

That conversation has completely changed.

Today, AI is operationally embedded in the tools that monitor your network traffic, analyse your endpoint behaviour, detect anomalies in your cloud infrastructure, and respond to incidents automatically without waiting for a human to review a ticket.

👉 From my experience, the shift became undeniable when I started seeing AI-assisted tooling being deployed not as a pilot project but as a default component of enterprise security stacks. Teams that were previously drowning in alert queues were suddenly able to prioritise and correlate threats in near real time. The technology had moved from the demo room to the production environment.

What makes this moment distinct is not just that AI is being used in cybersecurity. It is that it is being used effectively by both sides simultaneously. Defenders have access to machine learning models that can identify threats faster than any human team. And attackers have access to those exact same foundational technologies.

That is the central tension that every enterprise IT leader needs to understand right now.

📌 In Simple Words

AI in cybersecurity means using machine learning, generative AI, and automation to detect, respond to, and predict cyber threats. The challenge is that attackers have access to these same capabilities. The race is not between humans and machines. It is between AI-powered defenders and AI-powered attackers.

The Speed Advantage – Why AI Changes Everything in Threat Response

Speed is the metric that matters most in cybersecurity. The average time between a breach occurring and a defender detecting it used to be measured in months. AI has compressed that window dramatically for organisations that have deployed it properly.

In a large enterprise environment like the ones I have worked in, with thousands of endpoints, multiple cloud environments, legacy on-premise systems, and Kafka-based data pipelines flowing between them, the volume of security telemetry data generated every day is simply beyond human capacity to manually analyse. AI-driven security platforms ingest, correlate, and score that telemetry continuously, surfacing what matters and suppressing what does not.

This is not an incremental improvement. It is a structural change in how threat response operates.
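To make "ingest, correlate, and score" a little more concrete, here is a minimal sketch of the idea. It is not how any particular platform works: real products use learned models, while this uses hand-picked weights. The event fields, the `SEVERITY` table, and the `correlate` function are all hypothetical and exist only for illustration.

```python
from collections import defaultdict

# Hypothetical severity weights per event type (illustrative values only).
SEVERITY = {"failed_login": 1, "new_admin_account": 5, "outbound_to_rare_ip": 3}

def correlate(events):
    """Group events by host and return (host, score) pairs, highest score first."""
    scores = defaultdict(int)
    kinds = defaultdict(set)
    for event in events:
        host = event["host"]
        scores[host] += SEVERITY.get(event["type"], 0)
        kinds[host].add(event["type"])
    # Boost hosts that show several distinct event types: signals that look
    # unrelated on their own are more interesting when they share a machine.
    ranked = {host: score * len(kinds[host]) for host, score in scores.items()}
    return sorted(ranked.items(), key=lambda item: item[1], reverse=True)

# Made-up telemetry for two hosts.
events = [
    {"host": "laptop-17", "type": "failed_login"},
    {"host": "laptop-17", "type": "failed_login"},
    {"host": "db-02", "type": "new_admin_account"},
    {"host": "db-02", "type": "outbound_to_rare_ip"},
]
print(correlate(events))
```

In this toy data the database server ranks above the laptop, even though both produced the same number of events, because two different kinds of signal landed on the same host. That is the "surfacing what matters" step in miniature.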

❓ People Also Ask Question:

How does AI improve cybersecurity threat detection speed?

Answer: AI processes enormous volumes of security telemetry in real time, correlates patterns across endpoints and networks, and flags anomalies in seconds. Tasks that would take a human analyst hours to complete can be assessed and prioritised in near real time, dramatically reducing the window between a breach occurring and a defender responding.

How Hackers Are Using AI to Launch Smarter Attacks

One of the most alarming trends I’ve observed is how AI has upgraded phishing attacks.

Earlier phishing emails were easy to spot—poor grammar, suspicious tone.

Now? They are nearly perfect.

AI tools can:

- Mimic writing styles

- Generate personalized messages

- Analyze social media behavior

AI-Powered Phishing and Social Engineering at Scale

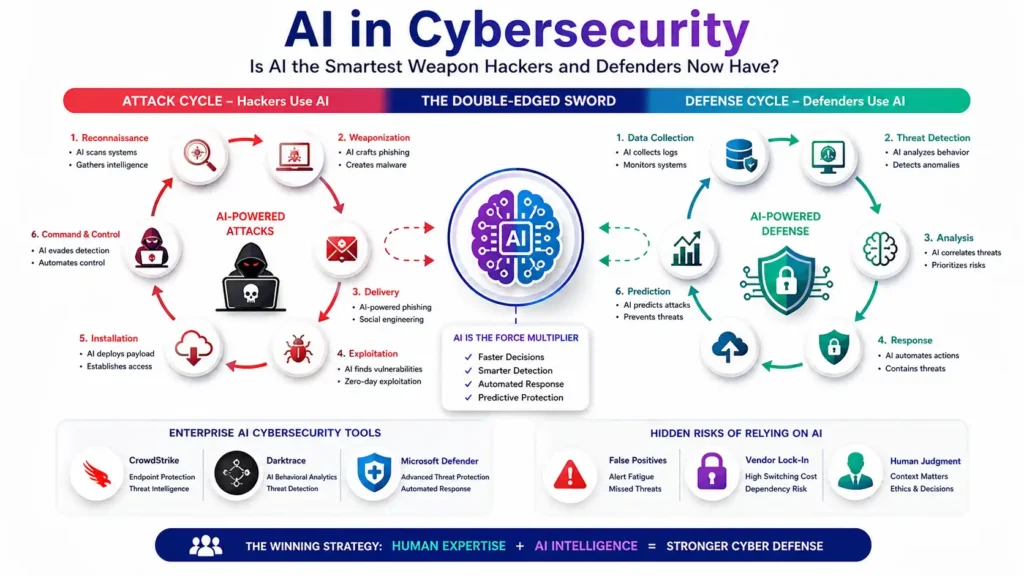

Phishing has always been the highest-volume, highest-success attack vector in the enterprise threat landscape. For years, the defence relied on training employees to spot poor grammar, suspicious links, and generic sender names. That defence is rapidly becoming obsolete.

Generative AI has given attackers the ability to produce personalised, grammatically flawless phishing emails at industrial scale. What used to require a native-language writer crafting individual messages can now be automated. AI models scrape public LinkedIn profiles, company websites, and social media to build context, and then generate targeted spear-phishing content that references real projects, real colleagues, and real business situations.

👉 From my experience reviewing security incidents in enterprise environments, the emails that bypass user awareness training are no longer the obvious ones. They are the ones that feel like they came from a real colleague, reference a real initiative, and ask for something that sounds completely reasonable in context. That level of personalisation at scale is now AI-generated.

The barrier to launching sophisticated social engineering campaigns has collapsed. And that has direct implications for every enterprise IT and HR team responsible for security awareness programs.

📌 In Simple Words

AI does not just help hackers write better phishing emails. It helps them write personalised ones at volume, targeting hundreds of employees simultaneously with messages that feel real because they are built from real publicly available information about your organisation.

Automated Vulnerability Discovery and Zero-Day Exploitation

Finding vulnerabilities in software has traditionally been a time-intensive, expertise-heavy process. Security researchers and ethical hackers spend significant time manually reviewing code, running fuzz tests, and analysing application behaviour to find exploitable weaknesses.

AI has accelerated this process dramatically on both the offensive and defensive sides.

On the offensive side, AI-powered tools can analyse codebases, identify patterns associated with known vulnerability classes, and generate exploit attempts automatically. What previously required a skilled penetration tester can now be partially automated. This means the window between a vulnerability being discovered and it being weaponised is shrinking.

Generative AI and Deepfake-Based Cyber Fraud

This is the attack vector that concerns me most from an enterprise risk perspective.

Deepfake technology, powered by generative AI, can now produce convincing audio and video impersonations of real individuals. We have already seen reported cases of attackers using AI-generated voice clones of executives to authorise fraudulent wire transfers. The CFO receives a call that sounds exactly like the CEO, requesting an urgent payment. The voice is cloned. The request is fraudulent. The money is gone.

In enterprise environments with complex organisational structures, distributed teams, and high volumes of routine approvals, this attack vector is genuinely dangerous. The technical sophistication required to execute it has dropped significantly as generative AI tools have become more accessible.

❓ People Also Ask Question:

How are hackers using AI to commit cyber fraud?

Answer: Hackers use generative AI to produce deepfake audio and video impersonating executives, automate personalised phishing campaigns using scraped organisational data, and accelerate vulnerability discovery in software systems. These AI-powered capabilities allow attackers to operate at a scale and speed that was previously impossible without large, skilled teams.

How Defenders Are Fighting Back with AI

While hackers are evolving, defenders are not standing still.

AI helps security teams:

- Monitor user behavior

- Detect anomalies

- Identify insider threats

AI-Driven Threat Detection and Behavioral Analytics

The most significant defensive application of AI in enterprise cybersecurity is behavioural analytics. Rather than relying purely on known threat signatures, which only catch attacks that have been seen before, AI models learn what normal behaviour looks like in your environment and flag deviations.

This matters because the most dangerous attacks are the ones that do not match any known signature. A compromised account behaving slightly differently from its baseline pattern. A service account querying data it has never accessed before. A lateral movement attempt that moves slowly enough to evade threshold-based rules.

AI models running continuously across your environment can surface these anomalies before they escalate.
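A minimal sketch of the baseline-and-deviation idea, assuming a single numeric signal per account (for example, rows read per day). Production behavioural analytics models are far richer than this; the z-score test, the `is_anomalous` function, the threshold of 3.0, and the sample numbers are my own illustrative assumptions.

```python
from statistics import mean, stdev

def is_anomalous(history, observed, threshold=3.0):
    """Flag `observed` if it is more than `threshold` standard deviations
    away from the mean of `history`."""
    if len(history) < 2:
        return False  # not enough data to form a baseline
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # A perfectly flat baseline: any change at all is a deviation.
        return observed != mu
    return abs(observed - mu) / sigma > threshold

# Hypothetical daily row counts read by one service account.
baseline = [100, 110, 95, 105, 98, 102, 107]
print(is_anomalous(baseline, 104))   # close to the usual range
print(is_anomalous(baseline, 5000))  # far outside the baseline
```

Notice that nothing here depends on a known attack signature. The only question being asked is whether today looks like the days before it, which is why this style of detection can catch behaviour nobody has seen before.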

📌 In Simple Words

Traditional cybersecurity tools look for known threats. AI-driven behavioural analytics looks for anything unusual, even if it has never been seen before. Think of it as a security guard who has memorised exactly how every employee in the building normally behaves and immediately notices when something does not fit.

Automated Incident Response and Containment

Speed of response is as important as speed of detection. When a threat is identified, every minute of delay represents additional exposure. AI-powered security orchestration platforms can now automate significant portions of the initial response workflow without waiting for human approval.

Isolating a compromised endpoint from the network. Blocking a suspicious IP address. Revoking access credentials for an account showing signs of compromise. Triggering a forensic snapshot of a suspicious process. These are actions that used to require a security analyst to review an alert, assess the situation, and manually execute a playbook. AI-driven platforms can execute them in seconds.

From a project management perspective, I think about this the same way I think about automated testing pipelines in software delivery. The goal is not to remove humans from the process. The goal is to remove humans from the parts of the process that do not require human judgment, so that human attention is concentrated where it genuinely matters.
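One way to picture that boundary is a small routing rule that decides which response actions run on their own and which wait for an analyst. This is a sketch under my own assumptions: the action names, the two sets, and the `plan_response` function are hypothetical, and where the line sits is a governance decision for each organisation, not something the code can decide.

```python
from dataclasses import dataclass

# Hypothetical split between actions treated as safe to automate and
# actions that should wait for an analyst. Illustrative only.
AUTO_ACTIONS = {"forensic_snapshot", "block_ip"}
APPROVAL_ACTIONS = {"isolate_endpoint", "revoke_credentials"}

@dataclass
class Decision:
    action: str
    target: str
    status: str  # "auto_approved", "pending_approval", or "rejected"

def plan_response(action, target):
    """Decide whether an action may run now or is queued for human review."""
    if action in AUTO_ACTIONS:
        return Decision(action, target, "auto_approved")
    if action in APPROVAL_ACTIONS:
        return Decision(action, target, "pending_approval")
    # Actions nobody has classified never run automatically.
    return Decision(action, target, "rejected")

for action, target in [("block_ip", "203.0.113.7"), ("isolate_endpoint", "laptop-17")]:
    print(plan_response(action, target))
```

The useful design choice is the default: anything not explicitly classified is rejected rather than executed, so the automation can only grow through a deliberate decision.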

Predictive Security – Stopping Attacks Before They Happen

The most advanced application of AI in enterprise cybersecurity is shifting from reactive to predictive. Rather than detecting threats that have already materialised, AI models can assess risk signals and identify the conditions under which an attack is likely to occur.

This includes threat intelligence feeds that use AI to correlate signals from across the internet, identifying when specific threat actor groups are becoming more active and targeting organisations in your sector. It includes vulnerability prioritisation models that assess which unpatched vulnerabilities in your environment are most likely to be exploited given current attacker behaviour patterns. It includes user and entity behaviour analytics that identify accounts showing pre-attack indicators before any actual breach occurs.
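Vulnerability prioritisation is the easiest of these to sketch. The example below ranks findings by combining severity with an estimated likelihood of exploitation and a simple exposure factor. Every name and number here is an assumption for illustration: the `priority` formula, the `exploit_likelihood` field (which in practice would come from a model or a threat intelligence feed), and the sample findings are all made up.

```python
def priority(vuln):
    """Combine severity, exploit likelihood, and exposure into one score."""
    # Hypothetical weighting: internet-facing assets count double.
    exposure = 2.0 if vuln["internet_facing"] else 1.0
    return vuln["severity"] * vuln["exploit_likelihood"] * exposure

# Made-up findings; IDs are placeholders, not real identifiers.
vulns = [
    {"id": "VULN-A", "severity": 9.8, "exploit_likelihood": 0.02, "internet_facing": False},
    {"id": "VULN-B", "severity": 7.5, "exploit_likelihood": 0.60, "internet_facing": True},
    {"id": "VULN-C", "severity": 5.0, "exploit_likelihood": 0.30, "internet_facing": True},
]

ranked = sorted(vulns, key=priority, reverse=True)
print([v["id"] for v in ranked])
```

The finding with the highest raw severity ends up last, because it is unlikely to be exploited and is not exposed. That reordering is the whole point of prioritising by likely attacker behaviour rather than by severity alone.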

❓ People Also Ask Question:

Can AI predict cyberattacks before they happen?

Answer: AI cannot predict attacks with certainty, but it can identify elevated risk conditions by correlating threat intelligence feeds, analysing vulnerability exposure, and detecting pre-attack behavioural patterns. Predictive security models give enterprise teams the ability to prioritise defensive actions before an attack materialises rather than responding after the damage is done.

Enterprise AI Cybersecurity Tools You Should Know

Modern enterprises rely heavily on AI-powered security tools.

Some key capabilities include:

- Endpoint protection

- Threat intelligence

- Behavioral analytics

CrowdStrike, Darktrace, Microsoft Defender — Real-World Capabilities

CrowdStrike Falcon is built around AI-powered endpoint detection and response. Its machine learning models analyse endpoint behaviour continuously and can detect and contain threats without requiring signature updates. In enterprise environments with thousands of endpoints across multiple operating environments, this is a significant operational advantage. The platform’s threat intelligence capabilities also give security teams visibility into attacker groups and campaigns targeting their industry.

Darktrace takes a self-learning approach, using unsupervised machine learning to build a model of normal behaviour for every user, device, and network connection in the enterprise environment. It then autonomously identifies and responds to deviations. Darktrace has branded the self-learning detection as the Enterprise Immune System and the autonomous response capability as Antigena. The latter can take containment actions in real time without human approval. From a risk governance perspective, configuring the boundaries of that autonomy carefully is important.

Microsoft Defender for Endpoint, particularly in organisations already running Microsoft 365 environments, has become increasingly capable as Microsoft has invested heavily in AI-powered security features. The integration with Microsoft Sentinel for SIEM capabilities, and the way it surfaces signals across identity, endpoint, cloud, and applications in a unified view, makes it a strong option for enterprises already in the Microsoft ecosystem.

📌 In Simple Words

CrowdStrike is strong on endpoint protection and threat intelligence. Darktrace is strong on behavioural anomaly detection and autonomous response. Microsoft Defender is strong for enterprises already running Microsoft 365 and Azure environments. None of them is a complete solution on its own. The best enterprise security posture layers multiple capabilities.

What to Look for When Evaluating AI Security Platforms

From my experience sitting in vendor evaluations across multiple enterprise clients, these are the questions that separate useful AI security capabilities from impressive demo features.

How does the platform explain its decisions? AI models that surface threats but cannot explain the reasoning behind an alert create more work for analysts, not less. Explainability is a non-negotiable requirement in regulated industries like pharmaceuticals and financial services.

What is the false positive rate in an environment like yours? Ask for reference customers in your industry and your infrastructure complexity. A platform that performs well in a cloud-native startup environment may behave very differently in a hybrid enterprise with legacy on-premise systems and complex network segmentation.

How does the platform integrate with your existing security stack? AI security tools that require ripping out your current SIEM or endpoint management platform to function create project risk that often outweighs the security benefit during the transition period.

What is the vendor’s data residency and privacy posture? In pharmaceutical and government-adjacent environments, the question of where your security telemetry data is processed and stored is a compliance requirement, not just a preference.

❓ People Also Ask Question:

What should enterprises look for in an AI cybersecurity platform?

Answer: Prioritise explainability of AI decisions, realistic false positive rates in your specific environment, integration with your existing security stack, and clear data residency and privacy policies. A platform that performs well in a vendor demo must be evaluated against your actual infrastructure complexity before any procurement decision.

The Hidden Risks of Relying on AI for Cybersecurity

AI is powerful—but not perfect.

Common issues:

- False alarms

- Missed threats

- Over-alerting

When AI Gets It Wrong — False Positives and Alert Fatigue

AI-powered threat detection is dramatically better than pure signature-based detection. But it is not perfect. And in enterprise security operations, the cost of getting it wrong in either direction is significant.

False positives, alerts that flag legitimate activity as threats, create operational drag. Security analysts spend time investigating events that turn out to be nothing. Over time, that creates alert fatigue, the well-documented phenomenon where analysts start ignoring or dismissing alerts because the signal-to-noise ratio has become unsustainable.

I have seen this happen in enterprise environments with immature AI security deployments. The platform is implemented, alert volumes increase dramatically because the AI is flagging everything it does not recognise as anomalous, the security team is overwhelmed, and the alert queues start being cleared without proper investigation. That is a worse security posture than before the AI was deployed.

The answer is not to abandon AI-powered detection. The answer is to invest in the tuning and calibration work that makes AI detection accurate in your specific environment. That takes time and expertise, and it needs to be factored into the implementation plan.
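The arithmetic behind tuning is simple enough to show. The sketch below computes precision, the share of raised alerts that were real threats, at different alerting thresholds. The scored alerts are invented for illustration, and `precision_at` is a hypothetical helper, not part of any product.

```python
# Hypothetical scored alerts: (model score, was it a real threat?)
alerts = [
    (0.95, True), (0.90, True), (0.70, False), (0.65, True),
    (0.40, False), (0.35, False), (0.30, False), (0.20, False),
]

def precision_at(threshold):
    """Precision of the alerts raised when only scores >= threshold are surfaced."""
    raised = [is_threat for score, is_threat in alerts if score >= threshold]
    return sum(raised) / len(raised) if raised else 0.0

for threshold in (0.2, 0.6, 0.9):
    print(threshold, round(precision_at(threshold), 2))
```

With this made-up data, raising the threshold from 0.2 to 0.6 halves the number of alerts analysts see while keeping every real threat. Pushing it to 0.9 removes the remaining false positive but also silences the real threat that scored 0.65. Tuning is that trade-off, worked out against your own environment rather than a toy list.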

📌 In Simple Words

An AI security tool that generates thousands of alerts a day is not a good security tool unless those alerts are mostly real threats. The implementation work that matters most is not the deployment. It is the tuning, the calibration, and the continuous refinement that makes the signal meaningful.

The Vendor Lock-In Problem in AI Security Platforms

This is the risk I raise in every enterprise AI discussion, regardless of the domain. AI security platforms from major vendors are sophisticated, integrated, and increasingly difficult to migrate away from once you have built your security operations around them.

The data your security platform ingests becomes training data for its AI models. The workflows your team builds around the platform’s alert management and response automation become embedded operational processes. The integrations you build into your identity, cloud, and endpoint management systems create dependency.

The mitigation is not to avoid AI security platforms. It is to build with portability in mind. Use open standards where available. Document your workflows in a platform-agnostic way. Evaluate your vendor’s API capabilities and data export options before you sign the contract.
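As a small illustration of building with portability in mind, the sketch below maps alerts from two differently shaped sources onto one neutral internal schema, so downstream workflows depend on your format rather than a vendor's. The vendor labels, field names, and the `normalise` function are entirely hypothetical and do not reflect any real product's export format.

```python
def normalise(vendor, raw):
    """Map a vendor-specific alert dict onto a neutral internal schema."""
    if vendor == "vendor_a":
        return {"host": raw["hostname"], "severity": raw["sev"], "summary": raw["title"]}
    if vendor == "vendor_b":
        return {
            "host": raw["device"]["name"],
            "severity": raw["risk_level"],
            "summary": raw["description"],
        }
    # Fail loudly so an unmapped source is noticed, not silently dropped.
    raise ValueError(f"no mapping defined for {vendor}")

# Made-up payloads in two different shapes.
a = normalise("vendor_a", {"hostname": "db-02", "sev": "high", "title": "New admin account"})
b = normalise("vendor_b", {"device": {"name": "db-02"}, "risk_level": "high",
                           "description": "New admin account"})
print(a == b)
```

If the playbooks and dashboards your team builds consume only the neutral shape, switching or adding a platform means writing one new mapping rather than rebuilding the workflows around it.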

Why Human Judgment Still Cannot Be Automated Away

I want to be direct about this because I think it is the most important point in this entire article.

AI in cybersecurity is extraordinarily powerful. It detects faster, correlates better, and responds more quickly than any human team could at scale. But it does not understand context the way an experienced security professional does.

When an AI platform flags unusual access to a sensitive database at 2am, the automated response might be to isolate the account and trigger an incident. But a senior security analyst might know that the organisation just launched an emergency patching cycle and the database team is working overnight. That context, drawn from organisational knowledge that has never been codified into any system, changes the appropriate response entirely.

The judgments that matter most in enterprise cybersecurity are deciding whether a particular risk is acceptable given business context, determining whether a response action will cause more operational disruption than the threat it is containing, and communicating the security implications of a business decision to non-technical stakeholders. These require human experience, organisational knowledge, and ethical reasoning that AI cannot replicate.

FAQ

❓ What is the role of AI in cybersecurity for enterprise IT teams?

AI enables enterprise IT security teams to detect threats faster, correlate alerts across complex multi-system environments, automate initial incident response actions, and shift from reactive to predictive security postures. For large enterprises with thousands of endpoints and significant data volumes, AI is now a fundamental operational requirement rather than an optional enhancement.

❓ How are hackers using AI to launch more sophisticated cyberattacks?

Hackers use AI to generate personalised phishing content at scale, automate vulnerability discovery in software systems, create deepfake audio and video for executive impersonation fraud, and accelerate the development of malware that can evade signature-based detection. The same AI technologies available to defenders are accessible to attackers, which is what makes this moment in cybersecurity uniquely challenging.

❓ What are the biggest risks of using AI in enterprise cybersecurity?

The biggest risks are high false positive rates that create alert fatigue, AI models that cannot explain their decisions in regulated environments, vendor lock-in from integrated AI security platforms, overreliance on AI that reduces investment in human expertise, and the misapplication of AI tools to environments they have not been properly calibrated for.

❓ Which AI-powered cybersecurity tools are most effective for enterprises?

CrowdStrike Falcon, Darktrace, and Microsoft Defender for Endpoint are among the most widely deployed AI-powered security platforms in enterprise environments. The most effective tool depends on your existing infrastructure, industry compliance requirements, integration needs, and the specific threat vectors most relevant to your organisation.

❓ Is AI in cybersecurity reliable enough for regulated industries like pharma or finance?

Yes, with appropriate architecture. Regulated industries require explainable AI outputs, full auditability of automated decisions, human override capability, and compliance with data residency requirements. These are design constraints that well-architected AI security implementations can satisfy, but they require deliberate planning from the beginning of the deployment, not as an afterthought.

🎯 Conclusion

The role of AI in cybersecurity is not coming. It is already here, operating on both sides of the battlefield simultaneously.

Hackers have access to the same generative AI, the same automation tools, and the same vulnerability discovery capabilities that your security team is deploying. The difference between an organisation that manages this well and one that does not is not which AI tools they have purchased. It is how thoughtfully they have deployed them, how rigorously they have governed them, and how clearly they have defined the boundary between what AI handles autonomously and what experienced humans must own.

From my experience across two decades in enterprise IT, the technology in any given wave is rarely the hard part. The governance, the integration, the people, and the decision-making frameworks are where enterprises succeed or fail.

AI in cybersecurity is no different.

Start by auditing where your security team currently spends the most time on high-volume, low-judgment tasks. That is your first AI automation opportunity. Layer AI-driven behavioural analytics into your existing monitoring infrastructure before replacing it. Invest in making your security analysts smarter with AI tools rather than replacing their judgment with AI decisions.

And never forget: the most sophisticated AI security platform in the world is only as effective as the experienced human team that governs it, interprets its outputs, and takes responsibility for the decisions it informs.

About the Author:

I am a seasoned IT professional. In recent years, I have invested seriously in understanding the practical application of generative AI in enterprise IT, including formal training in GitHub Copilot, ChatGPT prompting techniques, Microsoft Copilot, and AI agent architectures. I use these tools actively on live projects and write about them from the perspective of a practitioner, not a theorist.

I write at technofril.com to share experience-based insights on technology that actually works in real enterprise environments, the kind of perspective you do not find in vendor documentation or conference keynotes.
