Ethical Dangers of AI – Introduction

Most people talk about artificial intelligence as if it were purely a story of progress. Faster decisions. Smarter automation. Endless productivity gains. But there is another story. A quieter one. It involves the ethical dangers of artificial intelligence that rarely make it into the vendor presentations, the LinkedIn posts, or the executive briefings.

AI is the fastest, widest wave I have ever seen. And hidden beneath its surface are ethical risks that could cost organisations — and individuals — far more than a failed implementation.

This article is the honest version of that conversation.

What Are the Ethical Dangers of AI?

Let me be direct with you. When most people hear “AI ethics,” they picture a philosophy lecture or a corporate values slide. Something abstract. Something theoretical.

Ethics in AI is not a soft subject. It is an operational risk category — and in many industries, a regulatory one.

The reason this matters more now than ever is scale. When a human makes a biased decision, it affects one person. When an AI model makes a biased decision at enterprise scale, it affects thousands — often without anyone noticing for months or years.

The obvious risks of AI get plenty of coverage. Job displacement. Deepfakes. Autonomous weapons. These are real concerns — but they are also visible. They spark public debate. They attract regulatory attention relatively quickly.

The ethical dangers of artificial intelligence that I am more concerned about — the ones I have seen emerge inside real enterprise environments — are the hidden ones. The ones that look like efficiency gains until they do not.

A demand forecasting model trained on historical retail data will perpetuate historical inequalities in stock distribution if nobody examines the training set. An AI-assisted performance review tool will encode whatever patterns existed in past promotion decisions — including any bias those decisions carried — and project them forward at scale.

These are not hypothetical scenarios. These are the natural consequences of deploying AI without ethical architecture.

📌 In Simple Words

The ethical dangers of artificial intelligence are not just about sci-fi robots making bad decisions. They are about real systems, trained on real data, making decisions at a scale and speed that makes human review difficult — and sometimes impossible — without deliberate design choices to prevent it.

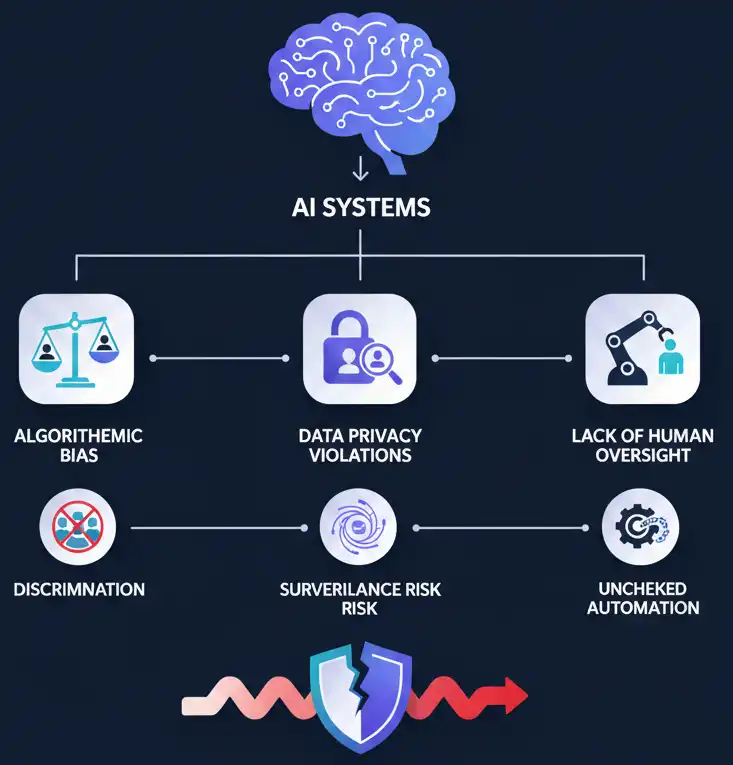

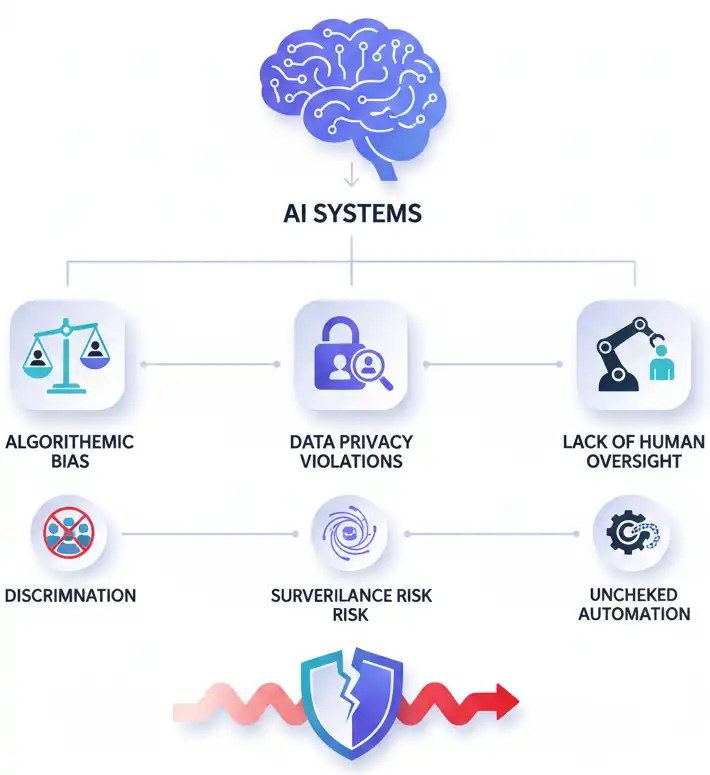

The Most Dangerous Ethical Risks AI Poses Today

Algorithmic Bias and Discrimination at Scale

This is the ethical danger that keeps me up at night more than any other. And it is the one that is most consistently underestimated in enterprise AI conversations.

Algorithmic bias occurs when an AI system produces systematically unfair outcomes for specific groups of people. It can emerge from biased training data, biased problem framing, biased feature selection, or biased evaluation criteria. It can be unintentional. It often is. And that is precisely what makes it so dangerous.

The Hidden Bias in the Machine

One of the most significant AI ethical risks I’ve encountered is the myth of machine objectivity. People assume that because a computer is making a choice, that choice is free of human prejudice.

📌 From my experience, the opposite is often true.

How Data Training Creates Systematic Discrimination

AI models learn from historical data. If you feed a model twenty years of hiring data from an industry that historically favored one demographic, the AI will “learn” that this demographic is the only one qualified for the job.

In my time working across the Pharma and Retail sectors, I’ve seen how data quality determines everything. If the underlying data is skewed, the AI doesn’t just repeat the bias—it amplifies it. This leads to AI bias and discrimination that is often hard to detect until the damage is done.

Real-World Examples of AI Bias in Action

Think about credit scoring or insurance premiums. If an AI uses postal codes or “lifestyle data” as proxies for race or socioeconomic status, it can effectively “redline” entire communities without ever mentioning a protected characteristic.
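To make the proxy problem concrete, here is a minimal sketch of how you might check whether a seemingly neutral feature gives away a protected attribute. It compares two guesses: always picking the most common group overall, versus picking the most common group within each postal code. The function name `proxy_strength`, the data shape, and the sample applicants are all assumptions made up for illustration, not a standard audit method.

```python
from collections import Counter, defaultdict


def proxy_strength(rows):
    """Measure how much a feature reveals about a protected attribute.

    `rows` is an iterable of (feature_value, protected_group) pairs.
    Returns (baseline, with_feature):
      baseline     - accuracy of always guessing the most common group
      with_feature - accuracy of guessing the most common group
                     within each feature value
    """
    rows = list(rows)
    overall = Counter(group for _, group in rows)
    baseline = overall.most_common(1)[0][1] / len(rows)

    by_value = defaultdict(Counter)
    for value, group in rows:
        by_value[value][group] += 1
    correct = sum(c.most_common(1)[0][1] for c in by_value.values())
    return baseline, correct / len(rows)


# Hypothetical applicants: (postal code, protected group)
applicants = (
    [("1000", "X")] * 45 + [("1000", "Y")] * 5
    + [("2000", "X")] * 5 + [("2000", "Y")] * 45
)

baseline, with_postcode = proxy_strength(applicants)
print(f"baseline={baseline:.2f} with_postcode={with_postcode:.2f}")
```

A large gap between the two numbers suggests the feature is acting as a stand-in for the protected attribute, even though the attribute itself never appears in the model's inputs.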

❓ People also ask

Question: How does AI bias actually happen?

Answer: AI bias occurs when the data used to train a model contains human prejudices or reflects historical inequalities. The machine identifies these patterns as “rules” and applies them to new situations, often discriminating against specific groups without explicit instructions to do so.

Data Privacy and Surveillance Risks in AI

AI systems are hungry for data. The more data they receive, the better they perform. This creates a structural incentive — in organisations, in products, in platforms — to collect as much data as possible, retain it as long as possible, and apply it as broadly as possible.

This incentive is in direct tension with individual privacy rights.

📌 From my experience:

The data governance conversation is almost always reactive rather than proactive. Data is collected because it can be. Permissions are broad because they are easier to implement that way. Retention policies are vague because nobody wanted to have the hard conversation about deletion.

When you layer AI onto a data environment built with those assumptions, the privacy risks multiply significantly. AI models can infer sensitive attributes — health conditions, political views, financial stress — from datasets that were never intended to carry that information. A model trained on browsing behaviour and purchase history can predict pregnancy before the individual has told anyone. A model trained on employee communications metadata can infer union activity.

These are not theoretical capabilities. They are documented ones.

The ethical danger is not just that the data exists. It is that AI can extract meaning from it that the individuals who generated it never consented to.

The Accountability Gap: Who Is Responsible?

As a Project Manager in a multinational company, the first thing I look for in any project is the “Responsible Party.” If a Kafka stream fails or an AWS instance goes down, I know exactly which team to call. With AI, that line of accountability is dangerously blurred.

When the Algorithm Fails: Legal and Professional Risks

If an AI-driven medical diagnostic tool misses a tumor, or a self-driving car makes a fatal error, who is to blame? Is it the developer? The data provider? The company that deployed the tool? This “Accountability Gap” is one of the most pressing artificial intelligence ethics problems.

The Challenge of “Black Box” Decision Making

In enterprise environments, “Explainability” is a requirement. If a bank denies a loan, it must be able to explain why. However, many deep learning models are so complex that providing a “human-readable” reason for a decision is nearly impossible.

Deepfakes and the Erosion of Truth

In my exposure to the Media & Entertainment industry, content was always vetted. Today, AI can generate hyper-realistic images, videos, and audio—known as Deepfakes—at the touch of a button.

Social Manipulation and the Misinformation Crisis

The ethical dangers of AI extend far beyond the office. Deepfakes can be used to manipulate public opinion, influence elections, or destroy reputations. When we can no longer trust our eyes and ears, the fabric of digital society begins to fray.

How AI Impacts Digital Trust in Business

For a professional blogger or a business owner, “Trust” is the primary currency. If your audience suspects your content is entirely “bot-generated” without a human lens, you lose your E-E-A-T (Experience, Expertise, Authoritativeness, and Trustworthiness).

Moving Toward Responsible AI Development

We cannot put the AI genie back in the bottle. Instead, we must focus on responsible AI development.

The Importance of Governance and Transparency

Governance isn’t just about “rules”; it’s about creating a framework where AI is audited regularly. Much like we perform SQL injection tests or security audits on legacy systems, we must perform “Bias Audits” on our AI models.
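A bias audit can start very simply: compare how often each group receives a positive outcome. The sketch below is one possible starting point, not a complete audit. The function names, the sample decisions, and the 0.8 threshold are assumptions for illustration; the threshold borrows from the "four-fifths" rule of thumb used in US employment contexts and is not a legal test on its own.

```python
from collections import defaultdict


def selection_rates(records):
    """Return the share of positive outcomes per group.

    `records` is an iterable of (group, approved) pairs; this shape
    is an assumption made for the sketch.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, approved in records:
        totals[group] += 1
        if approved:
            positives[group] += 1
    return {g: positives[g] / totals[g] for g in totals}


def disparate_impact_ratio(rates):
    """Lowest group selection rate divided by the highest."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected, so no group is favoured
    return min(rates.values()) / highest


# Hypothetical model decisions: (group label, was the applicant approved?)
decisions = (
    [("A", True)] * 60 + [("A", False)] * 40
    + [("B", True)] * 30 + [("B", False)] * 70
)

rates = selection_rates(decisions)
ratio = disparate_impact_ratio(rates)
flagged = ratio < 0.8  # illustrative threshold only
print(rates, f"ratio={ratio:.2f}", "needs review" if flagged else "ok")
```

A flagged ratio does not prove discrimination. It tells you where to look first, which is exactly what an audit is for.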

Practical Steps for Human-Centric AI

- Human-in-the-loop: Never let an AI make a final high-stakes decision without human review.

- Diverse Data Sets: Ensure your training data represents the real world, not just a narrow slice of it.

- Transparency: Be open with your users about when and how AI is being used.
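The human-in-the-loop step above can be sketched as a small routing rule that sits between the model and the final action. This is a minimal illustration under stated assumptions: the `Decision` fields, the `route` function, and the 0.9 confidence threshold are hypothetical, and a real system would define "high stakes" through its own business and regulatory rules.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    outcome: str        # what the model proposes, e.g. "approve" or "deny"
    confidence: float   # the model's own confidence, between 0 and 1
    high_stakes: bool   # set by business rules, not by the model


def route(decision, min_confidence=0.9):
    """Decide whether a model output may be applied automatically."""
    if decision.high_stakes:
        # High-stakes outcomes always go to a person, however confident
        # the model claims to be.
        return "human_review"
    if decision.confidence < min_confidence:
        return "human_review"
    return "auto_apply"


print(route(Decision("deny", 0.99, high_stakes=True)))
print(route(Decision("approve", 0.95, high_stakes=False)))
print(route(Decision("approve", 0.60, high_stakes=False)))
```

The design choice that matters here is the order of the checks: the high-stakes rule comes first, so model confidence can never be used to bypass human review.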

📌 In Simple Words

Responsible AI means using the tool to assist humans, not replace the human judgment that keeps things ethical and safe.

Conclusion

The ethical dangers of AI are not something to fear—but something to understand.

From bias and privacy risks to lack of transparency and accountability, AI presents challenges that we cannot ignore.

AI will make organisations faster, more capable, and more competitive. It will also — if deployed without ethical architecture — cause harm at a scale and speed that the old world of human decision-making never could.

The answer is not to stop. The answer is to be deliberate. To build governance before you build capability. To treat explainability, fairness, privacy, and accountability as design requirements rather than afterthoughts. To protect the human override principle even when volume and cost pressure push against it.

📌 From my experience:

The organisations that will navigate this well are not necessarily the ones with the biggest AI budgets or the most sophisticated models. They are the ones with the clearest thinking about what they are actually building, and about who bears the consequences when it goes wrong.

If there is one thing I want you to take from this article, it is this: the ethical dangers of AI are not someone else’s problem. They are an operational, legal, and human responsibility that every organisation deploying AI at any scale needs to own.

Start with one honest question: in your current AI deployments, if the model produced a harmful outcome today, would you know? Would anyone know?

If the answer is uncertain, that is where the work begins.

FAQ

❓What is the biggest ethical danger of AI today?

The most immediate danger is algorithmic bias, where AI systems unintentionally discriminate against individuals based on flawed or non-representative training data, leading to unfair outcomes in hiring, lending, and law enforcement.

❓How does AI threaten personal privacy?

AI threatens privacy through mass data harvesting and its ability to de-anonymize “hidden” data. Large Language Models can also inadvertently “remember” sensitive information from user prompts that end up in their training data, and later leak it.

❓Can we actually “fix” AI bias?

While we can’t perfectly eliminate bias, we can mitigate it through responsible AI development, using diverse datasets, conducting regular bias audits, and maintaining “human-in-the-loop” systems for critical decision-making processes.

❓Why is AI accountability so difficult?

Accountability is difficult because AI systems are often “Black Boxes.” When a complex algorithm makes an error, it is hard to pinpoint whether the fault lies with the code, the training data, or the user’s instructions.

❓What are “Deepfakes” and why are they an ethical concern?

Deepfakes are AI-generated media that look and sound like real people. They pose a major ethical risk by facilitating misinformation, fraud, and the erosion of public trust in digital communications.

About the Author

I am a technology professional with over two decades of hands-on experience in IT.

I write to share what I have actually learned. The ethical dangers of AI are not abstract concerns to me. They are the next chapter of a story I have been living inside for a very long time.

Today, I actively explore emerging technologies like Generative AI, ChatGPT prompting, and AI productivity tools. My passion lies in simplifying complex technical concepts and helping readers understand not just how technology works—but how it impacts their lives.

You can also explore my other articles below, which may be of interest to you.

👉 Know it all series for what is AI