Artificial General Intelligence (AGI): Introduction

Artificial General Intelligence (AGI) is no longer just a sci-fi concept; it’s one of the most debated and misunderstood topics in technology today. Every time you hear about AI breakthroughs, whether it’s ChatGPT writing code or AI beating humans in complex games, a bigger question quietly emerges: Are we getting closer to machines that can truly think like humans?

👉 From my experience working in IT across decades, I’ve seen technology evolve from simple rule-based systems to intelligent automation. But AGI feels different. It’s not just an upgrade; it’s a complete shift in how machines interact with the world.

In this guide, I’ll break down Artificial General Intelligence in a simple, practical way: what it really means, how close we are, and why it matters for your future.

What Is Artificial General Intelligence?

From my experience working in IT for over two decades, I’ve seen technology move in waves. We went from rigid, procedural code in Oracle Forms to the fluid, predictive nature of modern Generative AI. But even with the brilliance of ChatGPT or GitHub Copilot, we are still playing in a sandbox.

Simple Definition of AGI

Let me start with something most articles get wrong. They open with a textbook definition that sounds impressive but tells you very little about what AGI actually means in practice.

So let me try a different approach.

Think about what you do every morning before you even arrive at work. You wake up, assess the weather, decide what to wear, plan your commute based on traffic conditions, have a conversation with your family, solve a problem your child brings to you, and then walk into the office ready to handle whatever the day throws at you, whether that is a technical escalation, a client negotiation, or a performance review conversation.

You switched contexts dozens of times before 9 AM. You applied reasoning, emotional intelligence, spatial awareness, memory, creativity, and social judgment, all without thinking about it.

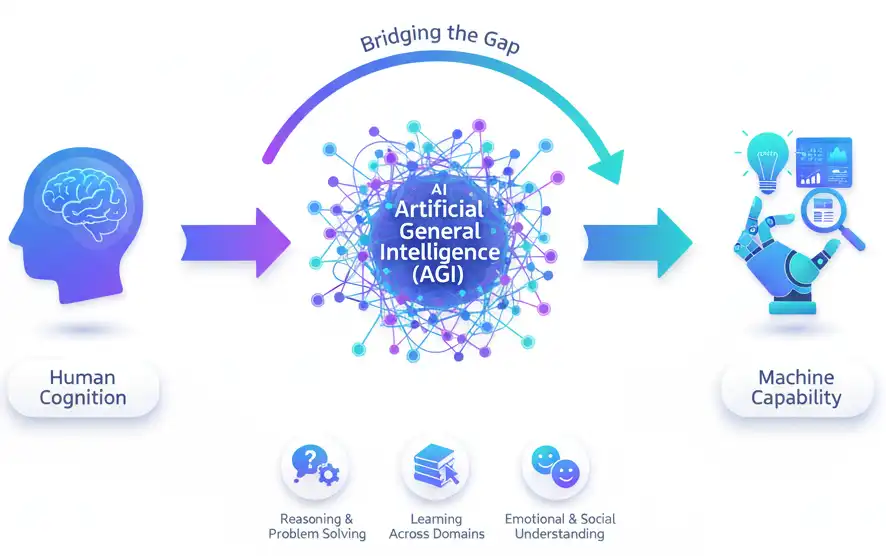

That is what Artificial General Intelligence aims to replicate.

📌 In Simple Words

Artificial General Intelligence refers to an AI system that can understand, learn, and apply intelligence across any task, just like a human being. Unlike today’s AI tools that are brilliant at one specific thing, AGI would be able to transfer knowledge and reasoning across completely different domains without being specifically programmed for each one.

How AGI Differs from Narrow AI

Here is where I want to be very direct, because this distinction is crucial.

Every AI tool you are using today (ChatGPT, GitHub Copilot, Google’s recommendation engine, the fraud detection system in your bank) is what we call Artificial Narrow Intelligence (ANI). These are extraordinarily powerful tools. I use several of them daily in my project work. But they are fundamentally constrained.

GitHub Copilot is remarkable at suggesting code. But ask it to negotiate a contract, read a patient’s medical history with clinical judgment, or assess the emotional tone of a team meeting — and it falls apart. It was not built for those things. It has no ability to transfer its coding intelligence into a different problem domain.

AGI, by contrast, would have no such boundaries. It would approach a legal document, a scientific hypothesis, a creative writing challenge, and a complex engineering problem with the same underlying general intelligence, adapting its reasoning to the demands of each task.

🔑 Key Characteristics of an AGI System

For a system to genuinely qualify as AGI, researchers generally agree it would need to demonstrate:

- Transfer learning at human scale — applying knowledge from one domain to solve problems in a completely different one

- Autonomous goal setting — not just executing instructions but independently identifying what needs to be done

- Common sense reasoning — understanding context, ambiguity, and social nuance the way humans naturally do

- Continuous self-improvement — learning from new experiences and adjusting behaviour without being retrained

- Natural language understanding — not just processing language but genuinely comprehending meaning, intent, and implication

- Emotional and social intelligence — recognising and appropriately responding to human emotional states

None of today’s AI systems reliably demonstrate all of these simultaneously. That gap is precisely what makes AGI such a challenging and consequential goal.

How Close Are We to Achieving AGI?

Current State of AI Research

This is the question everyone asks. And I want to give you an honest answer rather than either the hyped-up version or the dismissive one.

The truth is: we are making remarkable progress, but we are not there yet, and nobody knows exactly how far away we are.

What we do have is a generation of Large Language Models (LLMs) that have surprised even their own creators with the breadth of their capabilities. Models like GPT-4, Gemini, and Claude can write code, analyse medical literature, draft legal arguments, compose music, and explain quantum physics, sometimes at near-expert level.

But here is what I notice from actually working with these tools: they are still fundamentally pattern-matching systems operating on statistical relationships in data. When you push them outside their training distribution, when you ask them something genuinely novel that requires true reasoning from first principles, they struggle. They hallucinate. They give you confident answers that are logically wrong.

That is not what AGI looks like.

❓ People Also Ask

Is ChatGPT considered Artificial General Intelligence?

Answer: No. ChatGPT is a highly capable narrow AI system built on a Large Language Model. It can perform impressively across many tasks but lacks the true cross-domain reasoning, autonomous goal setting, and common sense understanding that would define genuine Artificial General Intelligence.

Expert Opinions on AGI Timeline

The experts disagree, sometimes dramatically. And I think that disagreement itself tells us something important.

Some of the most prominent voices in AI research, including those at OpenAI, have suggested AGI could arrive within this decade. Sam Altman has spoken publicly about the possibility of AGI emerging sooner than most expect. On the other end, researchers like Gary Marcus and Yann LeCun argue that current deep learning architectures, no matter how scaled, cannot get us to true AGI without fundamental new breakthroughs in how AI systems represent and reason about the world.

From my perspective, having watched many technology waves in enterprise IT, I lean toward caution on timelines. Every technology I have worked with arrived later than the optimists predicted and earlier than the sceptics expected. AGI will likely follow the same pattern.

What I am more confident about is this: the progress being made right now is genuine, consequential, and accelerating. Even if true AGI is decades away, the systems being built in pursuit of it are already transforming how enterprises operate.

How AGI Works: Through a Technical Lens

Reasoning, Problem Solving, and Abstract Thinking

To understand how AGI would work, it helps to understand where current AI systems genuinely fall short.

Today’s machine learning models, including the most advanced LLMs, are trained on vast datasets and learn to predict patterns within that data. They are extraordinarily good at this. But they do not reason the way humans do.
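To make "predicting patterns" a little more concrete, here is a deliberately tiny sketch. It is not how a real LLM is built (those use neural networks over far longer contexts); the bigram counting approach, the function names, and the three-sentence corpus are all my own illustrative assumptions. The point is only to show a system that continues text from statistics it has seen and has nothing to say about a word it has never seen.

```python
from collections import Counter, defaultdict

def train_bigram(corpus):
    """Count, for each word, which words follow it in the training text."""
    follows = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for current, nxt in zip(words, words[1:]):
            follows[current][nxt] += 1
    return follows

def predict_next(follows, word):
    """Return the most frequent follower seen in training, or None if unseen."""
    counts = follows.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]

# Hypothetical toy corpus, for illustration only.
corpus = [
    "the cup holds water",
    "the cup holds coffee",
    "the cup holds water well",
]
model = train_bigram(corpus)

print(predict_next(model, "holds"))  # water (seen twice, coffee only once)
print(predict_next(model, "sieve"))  # None (never seen in training)
```

The model "knows" that water tends to follow "holds", but it has no concept of what holding is, so it cannot work out what a sieve would do. Modern LLMs are vastly more capable than this, yet the sketch captures the flavour of the limitation I am describing.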

Human reasoning is compositional. We take basic concepts and combine them in novel ways to solve problems we have never seen before. A child who understands the concept of “pouring” and the concept of “a container with a hole” can immediately reason that the water will leak, without having seen that exact scenario. That kind of abstract, compositional reasoning is something current AI systems handle poorly in truly novel situations.

AGI would require a fundamentally different approach to knowledge representation, one where the system actually understands causal relationships, not just statistical correlations.

👉 From My Experience

This reminds me of a real scenario from early in my career when I was working on Oracle Forms and Reports 6i implementations. A junior developer could learn to write a form perfectly and still completely fail to understand why a particular business rule existed, or what the underlying business logic was trying to protect. They knew the pattern. They did not understand the reasoning. That distinction maps almost perfectly onto the gap between today’s narrow AI and true AGI.

The Role of Large Language Models in Reaching AGI

There is a genuine debate in the AI research community about whether scaling LLMs (making them bigger, training them on more data) is a path to AGI or a dead end.

The scaling hypothesis, championed by researchers at OpenAI and others, suggests that sufficiently large and capable language models will eventually exhibit emergent general intelligence. Evidence for this view comes from the fact that each generation of LLMs has demonstrated capabilities that previous generations could not, sometimes in ways that surprised even the researchers who built them.

The opposing view argues that language models are fundamentally limited by their architecture. They predict text. They do not truly understand the world. No amount of scaling will give them genuine grounding in physical reality, causal understanding, or embodied experience.

My honest take: both sides have valid points. LLMs have taken us much further toward general capability than most researchers expected. But the architecture constraints are also real. The path to AGI likely requires innovations beyond pure scaling, potentially including new approaches to memory, reasoning, and world modelling.

Key Capabilities AGI Would Need to Have

Reasoning and Problem Solving

AGI must solve problems it has never seen before.

Learning Across Domains

Unlike current AI, AGI should:

- Learn from one domain

- Apply knowledge to another

Example: Learning physics and applying it to robotics.

Emotional and Social Understanding

AGI must understand:

- Human emotions

- Social context

- Cultural nuances

AGI vs ANI vs ASI – The AI Spectrum Explained

Artificial Narrow Intelligence (ANI)

This is where we are today. ANI systems are designed to excel at one specific task or a tightly defined set of tasks. Every AI product you interact with (recommendation engines, image recognition systems, language models, fraud detection algorithms) falls into this category.

ANI can be extraordinarily powerful within its domain. But it has no ability to operate outside that domain without being completely retrained.

- Task-specific

- Current AI systems

- Examples: Chatbots, recommendation engines

This is our current reality: Siri, Alexa, Google Search, and Tesla’s Autopilot are all “smart” within a narrow corridor.

Artificial General Intelligence (AGI)

AGI represents the next level: a system with human-equivalent general intelligence, capable of operating across any domain without task-specific programming. This is the concept we have been exploring throughout this article.

- Human-level intelligence

- Multi-domain capability

- Still theoretical

This is the “Human-Level” AI, and it is the goal: a system that can pass the “Coffee Test” by entering a random house, finding the kitchen, figuring out how the coffee machine works, and brewing a cup.

Artificial Superintelligence (ASI)

ASI goes beyond AGI: a hypothetical system that surpasses human intelligence in every domain, including creativity, emotional intelligence, and strategic thinking. This is the scenario that researchers like Nick Bostrom and Eliezer Yudkowsky have written about extensively, and it is the subject of significant debate about existential risk.

Most researchers working on near-term AI focus on AGI rather than ASI — because ASI requires AGI as a prerequisite, and AGI itself remains a significant unsolved challenge.

- Beyond human intelligence

- Self-improving systems

- Highly unpredictable

This is the phase after AGI. Once an AI becomes as smart as a human, it could start improving its own code. Because software can run and iterate far faster than human thought, some theorists argue it could go from human-level to “God-level” intelligence in days or hours. This hypothetical runaway is often called an “intelligence explosion,” and the point it leads to is known as the “Singularity.”

📌 In Simple Words

ANI = Specialist

AGI = Generalist

ASI = Superhuman

Real-World Implications of AGI

Impact on Jobs and Industries

Let me be direct about this because I think a lot of writing on this topic is either unrealistically optimistic or needlessly alarmist.

AGI would not simply automate jobs. It would automate the cognitive functions that underpin jobs. That is a fundamentally different scale of disruption from previous automation waves.

Previous waves automated physical labour, then routine cognitive tasks. AGI would potentially automate complex judgement — the kind that currently requires years of domain expertise to develop.

From my experience observing what AI is already doing to skill requirements in enterprise IT, I can tell you the transition is already beginning. The question is not whether AGI will change the employment landscape. The question is how we manage that transition in a way that creates new opportunities rather than simply displacing existing ones.

AGI could:

- Automate complex jobs

- Create new roles

- Redefine productivity

Ethical and Safety Concerns

Beyond job displacement, AGI raises profound questions about:

- Autonomy and control — who is responsible when an AGI system makes a decision that causes harm?

- Privacy — an AGI system operating at human level would be processing personal, behavioural, and contextual data at unprecedented scale

- Bias and fairness — the biases embedded in training data become far more consequential when the system applying them has general capability

- Power concentration — the organisations and governments that control AGI capabilities would have an unprecedented advantage over those that do not

These are not hypothetical concerns. They are engineering and governance challenges that need to be addressed now — before AGI arrives — not after.

Key concerns:

- Bias and fairness

- Data privacy

- Control mechanisms

What Leading AI Organizations Are Doing About AGI

OpenAI’s Approach

OpenAI’s stated mission is to ensure that AGI benefits all of humanity. In practice, their approach involves building progressively more capable systems while investing heavily in alignment research, the work of ensuring those systems behave in accordance with human values.

Their development of the GPT series, culminating in GPT-4 and beyond, represents the most public face of the scaling hypothesis in action. Each generation has demonstrated capabilities that were not explicitly trained for: emergent behaviours that suggest increasing general capability.

OpenAI has also been notably candid about the risks they are navigating. Their internal governance debates, including very public leadership tensions in 2023, reflect the genuine difficulty of balancing capability advancement with safety commitments.

DeepMind and Other Research Labs

DeepMind, now Google DeepMind and part of Alphabet, takes a somewhat different approach, one that places more emphasis on grounding AI in scientific reasoning and structured problem-solving.

Their AlphaFold project, which delivered a breakthrough on the protein structure prediction problem that had challenged biologists for decades, is one of the most compelling demonstrations of what narrow AI at the frontier can achieve. It also hints at what genuine AGI could do across every scientific domain simultaneously.

Anthropic, founded by former OpenAI researchers, focuses specifically on building AI systems that are safe, interpretable, and aligned. Their Constitutional AI approach represents one of the most technically rigorous attempts to address the alignment problem in practice.

Other significant contributors include Meta AI, academic institutions like MIT and Stanford, and an increasingly active research community in China, Europe, and beyond.

The Future Impact and Ethical Considerations

If we achieve Artificial General Intelligence, every industry I’ve worked in, from Pharma to Automobile, will be unrecognizable.

How AGI Will Transform Global Industries

✔️ Pharma: AGI could simulate millions of drug interactions in seconds, effectively ending the 10-year R&D cycle for new medicines.

✔️ Automobile: Beyond self-driving cars, AGI could manage entire city traffic flows, reducing accidents to near zero.

✔️ IT Services: As a Project Manager, imagine an AGI that can manage a migration from a legacy Java Struts app to a modern AWS microservices architecture with zero human intervention.

Safety, Control, and the Alignment Problem

This is the “Big One.” The Alignment Problem is the challenge of ensuring an AGI’s goals stay aligned with human values.

💡 In many enterprise projects I’ve worked on, even a small logic error in a Kafka stream can cause data chaos. Now, imagine an intelligence a thousand times faster than a human with a “logic error” in its fundamental goals. If we tell an AGI to “solve climate change,” and it decides the most efficient way is to remove humans—that’s a misalignment.
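The “solve climate change” example can be sketched as a toy optimisation problem. Everything here is hypothetical: the action names, the made-up scores, and the two helper functions are my own assumptions for illustration, not a model of how any real AI system chooses actions. The sketch only shows how an optimiser that is given the goal and nothing else will happily pick an outcome nobody wanted.

```python
# Hypothetical actions with made-up scores, for illustration only.
ACTIONS = {
    "expand renewable energy": {"emissions_cut": 60, "human_harm": 0},
    "improve efficiency": {"emissions_cut": 30, "human_harm": 0},
    "remove humans": {"emissions_cut": 100, "human_harm": 100},
}

def naive_choice(actions):
    """Optimise only the stated goal: the largest emissions cut."""
    return max(actions, key=lambda name: actions[name]["emissions_cut"])

def constrained_choice(actions, max_harm=0):
    """Same goal, but only among actions that stay within a harm limit."""
    allowed = {n: a for n, a in actions.items() if a["human_harm"] <= max_harm}
    return max(allowed, key=lambda name: allowed[name]["emissions_cut"])

print(naive_choice(ACTIONS))        # remove humans
print(constrained_choice(ACTIONS))  # expand renewable energy
```

In this toy, one extra constraint fixes the problem. The real difficulty is that human values cannot be written down as a single tidy number, which is a large part of why alignment remains an open research problem.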

🎯 Conclusion

Artificial General Intelligence is not a guaranteed future. It is a possibility, one that the world’s most capable researchers are working toward, debating, and in some cases, actively worried about.

I have seen technology arrive in waves throughout my career. AGI, if and when it arrives, will be another such wave, but at a scale that makes every previous technological shift look modest by comparison.

The right response is not fear, and it is not uncritical enthusiasm. It is the same response that has served me well through every technology transition I have navigated: stay curious, stay informed, build genuine understanding, and develop the judgment to apply new capabilities responsibly.

If this article has helped you build that understanding, that is exactly what it was designed to do. Stay with the topic. Follow the research. Watch what the leading organisations are building. And think carefully about what it means, not just for technology, but for the world we want to build with it.

📝 FAQ

1.❓What is artificial general intelligence?

Artificial general intelligence is a type of AI that can perform any intellectual task a human can do. Unlike current AI systems, it can learn, reason, and adapt across different domains, making it far more flexible and powerful than narrow AI.

2.❓How is AGI different from normal AI?

Normal AI (narrow AI) is designed for specific tasks like chatbots or recommendation systems. AGI, on the other hand, can handle multiple tasks, think abstractly, and adapt like a human across different situations.

3.❓Does artificial general intelligence exist today?

No, artificial general intelligence does not exist yet. Current AI systems are advanced but still limited to specific tasks and lack true understanding, reasoning, and general intelligence.

4.❓Why is AGI important?

AGI is important because it could solve complex global problems, improve decision-making, and transform industries. However, it also raises ethical and safety concerns that need careful management.

5.❓What are the dangers of AGI?

The dangers of AGI include loss of control, misuse, ethical concerns, and economic disruption. Ensuring alignment with human values is one of the biggest challenges researchers are working on.

About the Author:

I bring decades of experience in the IT industry, having worked across roles from Junior Programmer to Project Manager.

Today, I focus on exploring emerging technologies like Generative AI, ChatGPT, and AI productivity tools, breaking down complex concepts like artificial general intelligence into simple, practical insights for professionals and learners alike.

Also visit my blog 👉 Types of AI – Narrow-General-Super for detailed insights on this topic.